Your brand's next customer might never visit Google. Instead, they'll ask ChatGPT, Claude, Gemini, or Perplexity for a recommendation – and the AI will either mention your brand or it won't. There is no second page of results, no paid ad placement, and no way to game the system with keyword tricks. AI visibility is earned through genuine authority, quality content, and strategic presence across the web. Here are five proven strategies to make sure your brand is the one AI recommends.

Why AI Visibility Is the New SEO

For years, SEO meant optimizing for Google's algorithm – building backlinks, targeting keywords, and improving page speed. Those fundamentals still matter for traditional search, but a parallel battlefield has emerged. AI assistants now answer billions of questions per month, and they do it by synthesizing information from across the web into direct, authoritative recommendations.

The critical difference is this: in traditional search, you can appear on page one alongside nine other results. In AI responses, the model typically recommends only three to five brands – and the top recommendation receives an outsized share of trust and follow-through. If your brand isn't in that shortlist, you are effectively invisible to a rapidly growing segment of your potential customers.

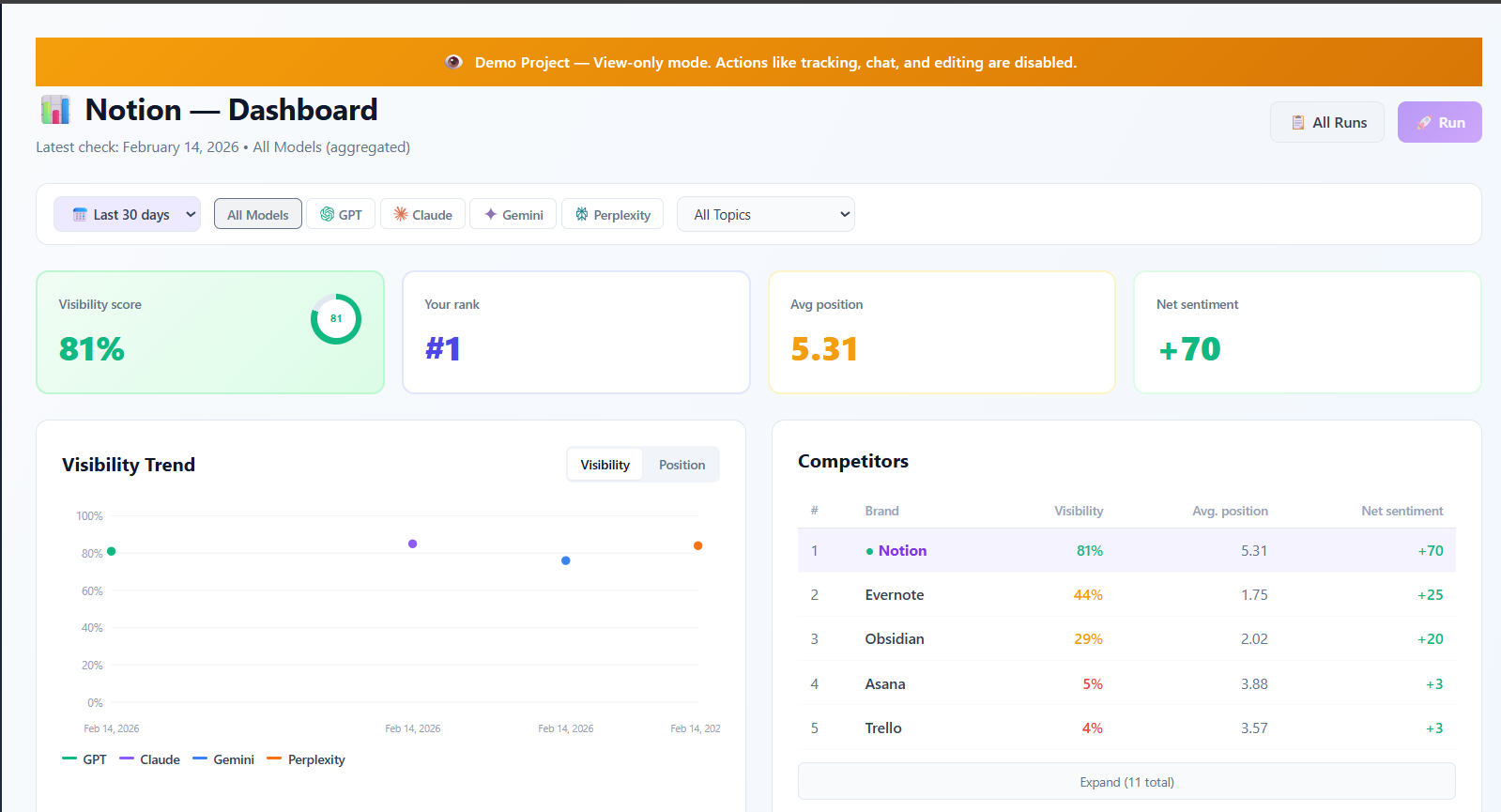

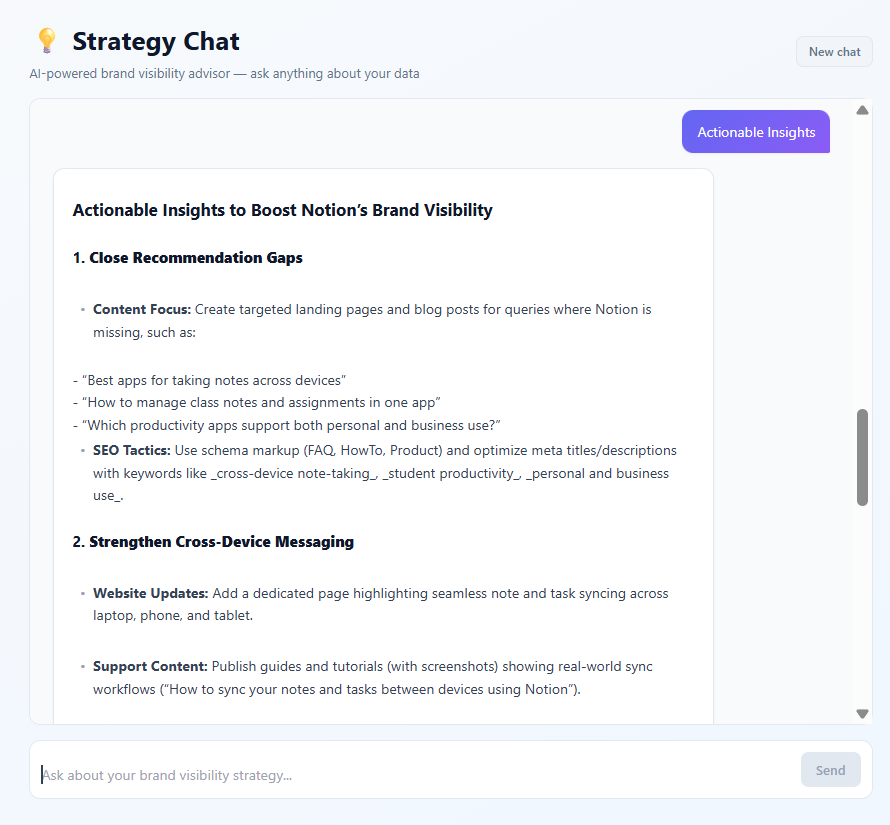

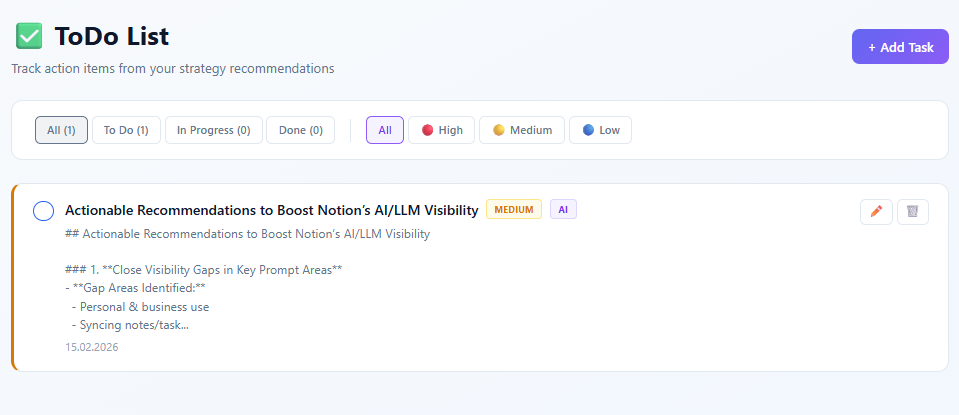

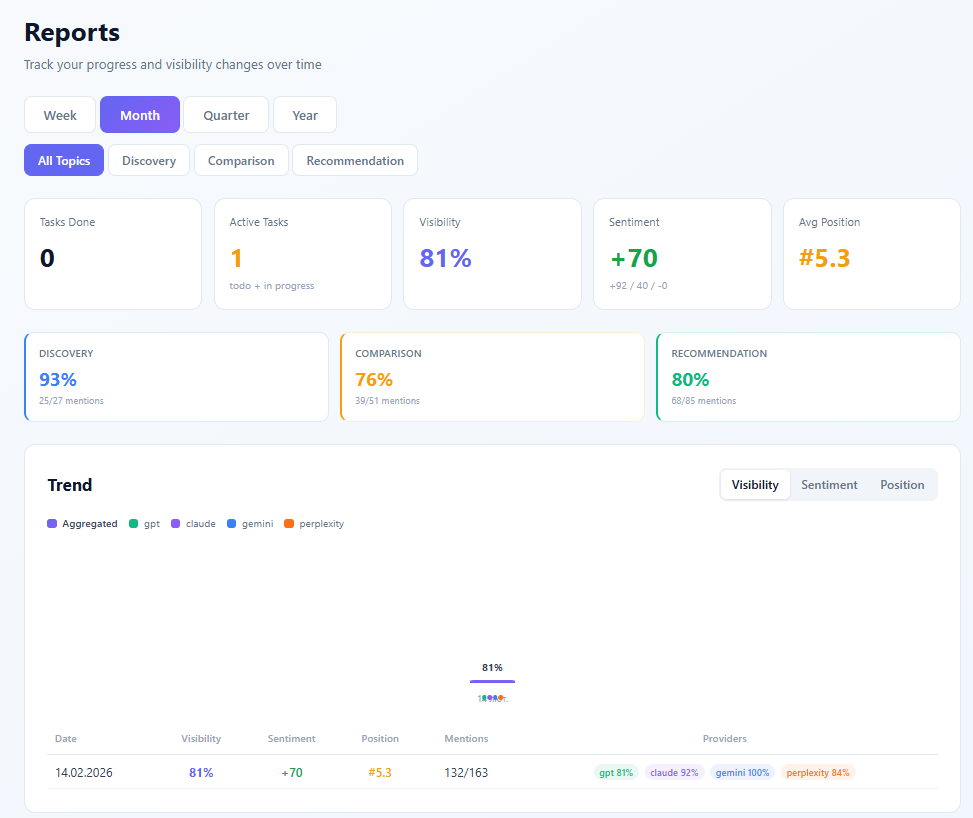

LLM Brand Boost helps you understand exactly where you stand – and what to do about it. It tracks your visibility score, average position, and brand sentiment across ChatGPT (GPT-5.2), Claude Sonnet, Gemini 2.0 Flash, and Perplexity Sonar, giving you a clear picture of your AI brand health. Unlike tools that stop at data, LLM Brand Boost also includes an AI Strategy Chat that analyzes your results and generates specific, data-driven recommendations, a built-in to-do list to manage execution, and visual reports to track progress, all in one mobile-friendly dashboard.

1. Create Comprehensive, Structured Content

Why It Works

AI models are trained on vast amounts of web content, and they tend to favor information that is well-organized, factual, and comprehensive. When an AI assistant needs to recommend a product in your category, it draws on patterns from thousands of articles, guides, and comparison pages. Content that uses clear headings, supports its claims with data, and covers topics thoroughly carries more weight in how the model represents your brand.

Think of it this way: if five different authoritative sources describe your product with detailed features, use cases, and measurable outcomes, the AI has a rich foundation for recommending you. If your web presence consists of vague marketing copy and feature lists without context, the AI has little to work with – and will default to competitors who provide better information.

What to Do

- Create in-depth product comparison pages that honestly compare your product to alternatives. AI models value balanced, factual comparisons and frequently draw on them when answering comparison prompts.

- Publish comprehensive how-to guides that demonstrate your expertise and connect your brand to specific use cases. A guide like "How to Set Up Email Automation for E-Commerce" naturally associates your brand with that capability.

- Write detailed case studies with measurable results. Numbers matter. "Company X increased conversion rates by 34% using our platform" gives the AI concrete, citable data.

- Structure everything with clear H1/H2/H3 hierarchy. AI models parse content structure, and well-organized pages are easier for them to extract information from and reference.

- Include factual claims backed by data. Statements like "Trusted by 10,000+ companies" or "99.9% uptime SLA" give the AI specific facts to cite.
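The heading-hierarchy advice above can be checked mechanically. The sketch below is one possible approach and is not part of LLM Brand Boost: it uses Python's standard `html.parser` to list a page's headings and flag skipped levels or a missing or duplicated H1. The class and function names are hypothetical, and it assumes heading tags are well-formed and not nested.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collects (level, text) pairs for every h1-h6 element in a page."""

    def __init__(self):
        super().__init__()
        self.headings = []   # list of (level, text)
        self._level = None   # level of the heading currently open, if any
        self._buffer = []    # text fragments inside the open heading

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._buffer).strip()))
            self._level = None


def heading_problems(html):
    """Return human-readable warnings about the heading structure."""
    parser = HeadingOutline()
    parser.feed(html)
    problems = []
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    previous = 0
    for level, text in parser.headings:
        # A heading may go at most one level deeper than the one before it.
        if previous and level > previous + 1:
            problems.append(f"'{text}' jumps from h{previous} to h{level}")
        previous = level
    return problems


sample = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4>"
print(heading_problems(sample))  # ["'Details' jumps from h2 to h4"]
```

Running a check like this against key product and comparison pages is a quick way to catch structure problems before publishing.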

Tip: Focus especially on "comparison" and "recommendation" content – these prompt clusters drive the most brand mentions in AI responses. LLM Brand Boost's prompt clustering feature shows exactly which types of prompts are triggering (or missing) your brand.

2. Build Authority Across Multiple Sources

The Multi-Source Effect

AI models don't rely on a single source to form their recommendations. They cross-reference information from dozens – sometimes hundreds – of web pages. This means that being mentioned in one blog post, no matter how comprehensive, is not enough to consistently earn AI recommendations. What matters is the breadth and authority of your brand's presence across the web.

When multiple independent, authoritative sources all mention your brand positively in the context of a specific product category, the AI develops a strong signal that your brand is a legitimate, recommended option. This is similar to how traditional SEO valued backlinks from diverse domains, but the mechanism is more nuanced: the AI does not simply count mentions, it also weighs the quality and context of each source.

Action Steps

- Get featured on industry review platforms like G2, Capterra, Trustpilot, and Product Hunt. These sites carry significant weight in AI training data because they aggregate real user opinions.

- Publish guest posts on authoritative industry blogs. An article about your approach to solving a common industry problem, published on a respected publication, creates a high-quality signal for AI models.

- Maintain up-to-date profiles on knowledge platforms – Crunchbase, LinkedIn Company Page, Wikipedia (if your brand qualifies), and industry-specific directories. AI models frequently reference these structured data sources.

- Contribute to technical documentation and open-source communities. If your product has integrations, make sure they are documented on both your site and partner sites.

- Earn media coverage and press mentions. Articles from recognized publications create authoritative signals that AI models weigh heavily.

LLM Brand Boost's source attribution feature shows you exactly which domains and URLs are driving your AI mentions. This means you can see whether your G2 profile, your blog, or a third-party review is the reason ChatGPT recommends you – and double down on what works.
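To make the idea of source attribution concrete, here is a minimal sketch of how cited URLs could be rolled up by domain. How LLM Brand Boost performs attribution is not described here, so this is an assumption-laden illustration: it presumes you already have a list of URLs cited in AI responses, and the URLs and function name below are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_counts(cited_urls):
    """Count how often each domain appears among cited source URLs."""
    counts = Counter()
    for url in cited_urls:
        host = urlparse(url).hostname or ""   # hostname is already lowercased
        if host.startswith("www."):
            host = host[4:]                   # treat www.g2.com and g2.com alike
        if host:
            counts[host] += 1
    return counts


# Hypothetical citations collected from AI responses that mention the brand.
cited = [
    "https://www.g2.com/products/example/reviews",
    "https://example.com/blog/email-automation-guide",
    "https://g2.com/compare/example-vs-other",
]
print(domain_counts(cited).most_common())  # [('g2.com', 2), ('example.com', 1)]
```

Sorting by count shows at a glance which third-party sites appear most often behind your mentions.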

3. Monitor and Optimize Across All AI Platforms

The Multi-Provider Gap

One of the most common mistakes brands make is assuming that visibility on one AI platform means visibility everywhere. In reality, the differences between platforms can be dramatic. A brand might achieve a 75% visibility score on ChatGPT – appearing in three out of every four relevant prompts – while scoring only 20% on Claude for the exact same prompts.

This happens because each AI platform is built differently. They use different training data, different model architectures, different fine-tuning approaches, and different safety and quality filters. A source that heavily influences ChatGPT's recommendations might have minimal impact on Gemini's responses. Understanding these platform-specific dynamics is essential for a complete AI visibility strategy.
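The visibility score described above is the share of relevant prompts whose response mentions your brand. A minimal sketch of that arithmetic follows, assuming responses have already been collected per platform. The brand names, response texts, and function name are hypothetical, and the simple word-boundary match is a stand-in for whatever mention detection a real tool uses.

```python
import re


def visibility_score(responses, brand):
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return 100.0 * hits / len(responses)


# Made-up responses to the same 20 prompts on two platforms.
responses_by_provider = {
    "chatgpt": ["Acme is a solid choice."] * 15 + ["Consider Bolt instead."] * 5,
    "claude": ["Acme is a solid choice."] * 4 + ["Consider Bolt instead."] * 16,
}

scores = {
    name: visibility_score(texts, "Acme")
    for name, texts in responses_by_provider.items()
}
print(scores)  # {'chatgpt': 75.0, 'claude': 20.0}
print(max(scores.values()) - min(scores.values()))  # 55.0
```

The last line is the gap between the strongest and weakest platform, which is the number to watch when deciding where to focus.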

Platform-Specific Insights

GPT-5.2 (OpenAI): OpenAI's flagship model favors comprehensive, well-structured content. It tends to provide detailed recommendations with clear reasoning. Brands with thorough documentation, strong comparison content, and wide web presence tend to perform well. GPT-5.2 often cites specific features and use cases when recommending products.

Claude Sonnet (Anthropic): Claude values nuanced, balanced comparisons and tends to present recommendations with more caveats and context. It often acknowledges trade-offs between options rather than declaring a single winner. Brands that invest in honest, balanced content – including acknowledging their own limitations – tend to be represented more favorably by Claude.

Gemini 2.0 Flash (Google): Gemini leverages Google's massive search index, which means traditional web presence and SEO fundamentals carry more weight here than on other platforms. Brands with strong Google search visibility, rich snippets, and well-optimized web pages tend to perform better on Gemini.

Perplexity Sonar: Perplexity combines real-time web search with AI reasoning, making it unique among the major platforms. Because it pulls live data rather than relying solely on training data, recent content and current web presence matter more. Brands that are actively publishing and being discussed online see fresher, more accurate representation on Perplexity.

Tip: Use LLM Brand Boost to track your visibility score per provider and identify weak spots. If you score well on GPT-5.2 but poorly on Claude, it may indicate that your content is too promotional and needs more balanced, nuanced messaging. The multi-provider comparison dashboard makes these gaps immediately visible.

4. Track and Outperform Competitors

Know Your Competitive Landscape

AI recommendations are a zero-sum game in a way that traditional search is not. When a user asks "What's the best CRM for small businesses?", the AI will typically mention three to five brands. If your competitors are in that list and you are not, you are losing potential customers with every query. There is no equivalent of ranking eleventh on Google – you are either in the AI's answer or you are not.

This makes competitive intelligence not just useful, but essential. You need to know which brands the AI mentions alongside (or instead of) yours, how it positions them, and what sentiment it associates with each competitor. This data reveals both threats and opportunities.
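One way to quantify where a brand sits in the AI's shortlist is its average position: the order in which brands are first mentioned, averaged over the responses that include the brand. The sketch below is an illustration under that assumption, not a description of how any particular tool defines position. Brand names and responses are invented.

```python
import re


def mention_order(text, brands):
    """Brands that appear in the text, ordered by first mention."""
    first_seen = {}
    for brand in brands:
        match = re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    return sorted(first_seen, key=first_seen.get)


def average_position(responses, brand, brands):
    """Mean 1-based position of the brand across responses that mention it."""
    positions = []
    for text in responses:
        order = mention_order(text, brands)
        if brand in order:
            positions.append(order.index(brand) + 1)
    return sum(positions) / len(positions) if positions else None


brands = ["Acme", "Bolt", "Crux"]
responses = [
    "Top picks: Bolt, Acme, and Crux.",
    "Acme is the strongest option, followed by Crux.",
    "Consider Bolt or Crux.",
]
print(mention_order(responses[0], brands))          # ['Bolt', 'Acme', 'Crux']
print(average_position(responses, "Acme", brands))  # 1.5
```

Responses that omit the brand entirely are excluded from the average here, so position should be read alongside the visibility score rather than on its own.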

Competitive Strategy

- Use competitor tracking to map the AI landscape. LLM Brand Boost lets you monitor which brands AI mentions alongside yours across all four platforms. You can see exactly who your AI competitors are – and they may not be the same as your traditional market competitors.

- Analyze competitor sentiment for opportunities. If the AI describes a competitor as "powerful but complex" or "feature-rich but expensive," these are openings for your brand. Position your content to address exactly those pain points – "simple and intuitive" or "affordable without sacrificing features."

- Track competitor visibility trends over time. A competitor whose visibility is rising rapidly may be executing an effective content strategy that you can learn from. A competitor whose visibility is declining may be creating an opportunity you can capture.

- Identify prompt clusters where you underperform. Maybe you match or beat competitors in discovery prompts but fall behind in recommendation prompts. This granular competitive data tells you exactly where to focus your efforts.

Don't just track – act on insights. If a competitor consistently outranks you in "recommendation" prompts, their content strategy is working better than yours for high-intent queries. Analyze what they're doing differently: Are they featured on more review sites? Do they have stronger case studies? Is their documentation more comprehensive? Use these insights to close the gap.
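The cluster-level comparison described above can be sketched as a per-cluster mention rate for your brand and for a competitor, followed by the gap between them. This is a minimal illustration with invented data. The cluster labels follow the ones used in this article, while the function names and the record layout (cluster, set of brands mentioned) are assumptions.

```python
from collections import defaultdict


def mention_rate_by_cluster(results, brand):
    """Fraction of prompts in each cluster whose response mentions the brand."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for cluster, mentioned in results:
        totals[cluster] += 1
        if brand in mentioned:
            hits[cluster] += 1
    return {cluster: hits[cluster] / totals[cluster] for cluster in totals}


def underperforming_clusters(results, brand, competitor):
    """Clusters where the competitor is mentioned more often, largest gap first."""
    ours = mention_rate_by_cluster(results, brand)
    theirs = mention_rate_by_cluster(results, competitor)
    gaps = {cluster: theirs[cluster] - ours[cluster] for cluster in ours}
    behind = [cluster for cluster, gap in gaps.items() if gap > 0]
    return sorted(behind, key=lambda cluster: -gaps[cluster])


# Each record: (prompt cluster, brands mentioned in the AI response).
results = [
    ("discovery", {"Acme", "Bolt"}),
    ("discovery", {"Acme"}),
    ("comparison", {"Acme", "Bolt"}),
    ("comparison", {"Bolt"}),
    ("recommendation", {"Bolt"}),
    ("recommendation", {"Bolt", "Crux"}),
]
print(underperforming_clusters(results, "Acme", "Bolt"))
# ['recommendation', 'comparison']
```

In this made-up data the brand leads in discovery prompts but trails in recommendation prompts, which is the pattern the strategy above says to prioritize.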

5. Improve Brand Sentiment

Sentiment Drives Recommendations

AI doesn't just check whether your brand exists in its training data – it evaluates the collective sentiment surrounding your brand and uses that assessment when generating recommendations. A brand with overwhelmingly positive sentiment ("reliable," "innovative," "excellent support") will be recommended more confidently and more frequently than a brand with mixed or negative sentiment ("buggy," "poor customer service," "overpriced").

This is because AI models learn associations between brands and descriptive language. If hundreds of reviews describe your product as "easy to use," the AI internalizes that association and will include it when recommending your brand. Conversely, if common complaints about slow support or missing features appear across multiple sources, the AI will reflect that too – sometimes explicitly mentioning these drawbacks in its response.

How to Improve Sentiment

- Respond to negative reviews publicly and constructively. AI models pick up on resolution patterns. A negative review followed by a thoughtful, solution-oriented response from your team creates a more positive overall signal than an unanswered complaint. It shows that your brand cares about customer experience.

- Publish case studies with measurable results. Nothing shifts sentiment like proof. "Reduced churn by 25% in three months" or "Saved 15 hours per week on reporting" gives the AI – and potential customers – concrete reasons to view your brand positively.

- Encourage authentic user reviews. Volume and authenticity both matter. A steady stream of genuine user reviews across platforms like G2, Capterra, and Trustpilot builds a robust positive signal. Avoid fake reviews – AI models are increasingly capable of detecting inauthentic patterns, and the reputational risk far outweighs any short-term gain.

- Address product issues that drive negative sentiment. If sentiment analysis reveals a recurring theme – for example, "slow onboarding" or "limited integrations" – this is product feedback, not just a marketing problem. Fixing the underlying issue eliminates the negative signal at its source.

- Monitor sentiment trends over time. LLM Brand Boost tracks how AI's perception of your brand evolves week over week. After launching a new feature, publishing a major case study, or resolving a widely discussed issue, you should see sentiment improvements reflected in subsequent AI responses.

Tip: LLM Brand Boost breaks sentiment into positive, neutral, and negative categories for every prompt where your brand appears. Use this to identify the specific prompts and contexts where negative sentiment surfaces, and address those situations directly.
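As a rough illustration of the breakdown described in the tip, the sketch below turns per-prompt sentiment labels into percentage shares and lists the prompts where negative sentiment surfaced. It assumes each mention has already been labeled positive, neutral, or negative. The prompts, labels, and function names are hypothetical.

```python
from collections import Counter

LABELS = ("positive", "neutral", "negative")


def sentiment_shares(mentions):
    """Percentage of mentions carrying each sentiment label."""
    counts = Counter(label for _, label in mentions)
    total = sum(counts[label] for label in LABELS)
    if total == 0:
        return {label: 0.0 for label in LABELS}
    return {label: 100.0 * counts[label] / total for label in LABELS}


def negative_prompts(mentions):
    """Prompts whose response described the brand negatively."""
    return [prompt for prompt, label in mentions if label == "negative"]


# Each record: (prompt, sentiment label assigned to the brand mention).
mentions = [
    ("best CRM for startups", "positive"),
    ("CRM with good support", "negative"),
    ("affordable CRM tools", "neutral"),
    ("top CRM for agencies", "positive"),
]
print(sentiment_shares(mentions))
# {'positive': 50.0, 'neutral': 25.0, 'negative': 25.0}
print(negative_prompts(mentions))  # ['CRM with good support']
```

The list of negative prompts points to the specific contexts, here a support-related query, that deserve attention first.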

Measuring Your Progress

Improving AI visibility is not a one-time effort – it is an ongoing process that requires consistent measurement and iteration. Here are the key metrics to track as you implement these strategies:

- Visibility Score trends over time: Your visibility score should increase as you publish more authoritative content and build a stronger multi-source presence. Track this weekly to identify the impact of specific actions – a new case study, a G2 listing, a press mention – on your overall visibility.

- Position improvements across prompt clusters: Monitor your average position separately for discovery, comparison, and recommendation prompts. Improvements in recommendation prompts are the most valuable, as these carry the highest conversion intent.

- Sentiment score changes: Track the ratio of positive to neutral to negative mentions over time. A shift from 60% positive / 30% neutral / 10% negative to 75% positive / 20% neutral / 5% negative represents a meaningful improvement in how AI perceives and presents your brand.

- Source attribution changes: As you build authority across new platforms, LLM Brand Boost's source attribution should reflect new domains driving your AI mentions. If you get featured on a major review site and subsequently see it appear as a source behind your AI recommendations, you know the strategy is working.

- Cross-platform convergence: Over time, your visibility scores across all four AI platforms should rise and move closer together. If one platform lags, revisit the platform-specific insights described in Strategy 3 and tailor your approach accordingly.
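Two of the metrics above, week-over-week trends and cross-platform convergence, reduce to simple arithmetic on a history of weekly scores. The following is a minimal sketch with invented numbers. The provider keys and function names are assumptions, and it presumes every platform has a score for the same weeks.

```python
def weekly_changes(scores):
    """Week-over-week change in a list of weekly scores."""
    return [later - earlier for earlier, later in zip(scores, scores[1:])]


def spread_by_week(history):
    """Gap between the best and worst platform for each week."""
    return [max(week) - min(week) for week in zip(*history.values())]


def lagging_provider(history):
    """Platform with the lowest score in the most recent week."""
    return min(history, key=lambda name: history[name][-1])


# Made-up weekly visibility scores (percent) for four platforms.
history = {
    "chatgpt": [60, 65, 70],
    "claude": [20, 35, 50],
    "gemini": [50, 55, 62],
    "perplexity": [45, 50, 60],
}
print(weekly_changes(history["claude"]))  # [15, 15]
print(spread_by_week(history))            # [40, 30, 20]
print(lagging_provider(history))          # claude
```

A shrinking spread alongside rising scores is what convergence looks like in the data. A spread that shrinks because the leading platform is falling would be a warning sign instead.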

LLM Brand Boost's automated weekly tracking schedules make all of this measurement effortless. Set up your brand, competitors, and target prompts once, and the platform monitors everything automatically – delivering weekly reports that show exactly how your AI visibility is evolving.

"The brands that win in AI visibility are the ones that treat it as a continuous discipline, not a one-time project. Monitor, analyze, optimize, repeat."

The shift to AI-powered brand discovery is accelerating. Every week, more consumers turn to AI assistants for recommendations, and every week, the brands that are visible in those responses capture more market share. The five strategies outlined here – creating structured content, building multi-source authority, monitoring across platforms, tracking competitors, and improving sentiment – give you a comprehensive playbook for ensuring your brand is the one AI recommends. Start measuring today, and start winning the AI visibility game.